Author: Elton Stoneman

All applications are distributed - even a simple web server connecting to a database has two components. That means communication is a critical part of your system. Every component needs to know how to find the other components, has to understand the security of the connection, and needs logic to handle any failures.

It would be nice to centralize all those concerns and manage them independently of the application itself, and that’s what a service mesh does. It takes the communication layer of your application and makes it into a separate entity, with a consistent way of modelling and managing the connections between all your components.

Istio 101

Istio is the best-known and most fully featured service mesh. It works by hijacking the network setup in your system, so all communication goes through network proxies. The proxies add their own logic using the rules you’ve modelled and that’s all transparent to the components making and receiving the network calls.

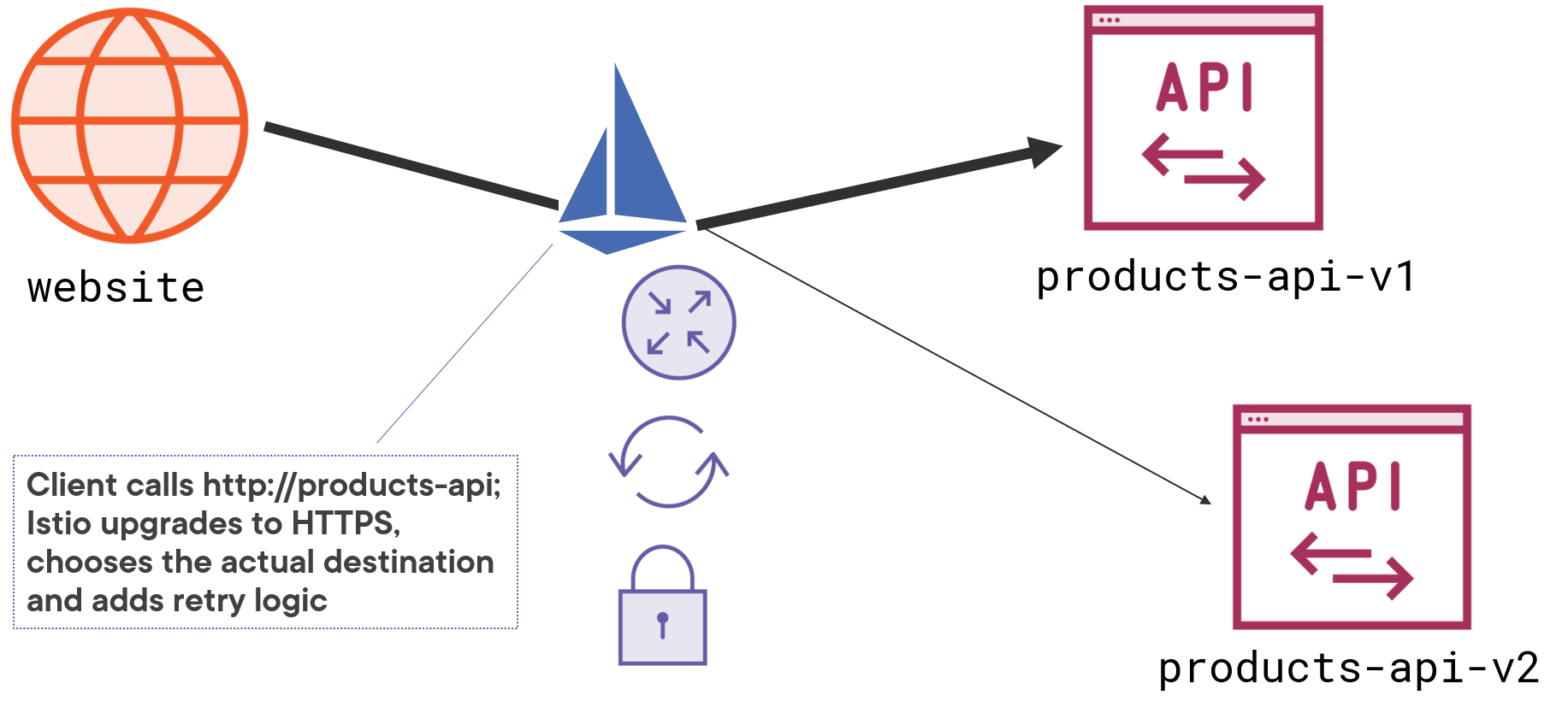

Your web application might call a REST service using plain text at the address http://products-api, but Istio intercepts the call and translates it into an encrypted request which it chooses to send to either https://products-api-v1 or https://products-api-v2. If the call fails, then Istio will automatically retry it, and the service itself is only using HTTP - Istio transparently upgrades the communication to HTTPS:

There are a whole bunch of benefits here:

- Your application code is simple. Network concerns don’t need to be built into each component: encryption, certificate management and fault management can all be left to Istio.
- Communication is modeled consistently. You may have components in different languages communicating with different network protocols, but Istio gives you a consistent way to define all the rules.
- Communication is managed centrally. You don’t need any application updates to change the network configuration; it’s all done by updating the rules Istio applies.
- You get observability for free. Distributed systems get more complex as you add more components. With Istio all the traffic goes through proxies which can record metrics, giving you a high level of detail on network calls without you having to write instrumentation code.

Istio can work with different platforms, but it’s typically used with Kubernetes. The flexibility of Kubernetes and the lightweight nature of containers means it’s easy for Istio to inject proxies and control the network.

The Pluralsight course Managing Apps on Kubernetes with Istio covers all the details, with lots of examples showing what Istio can do.

Traffic Routing and Failure Management

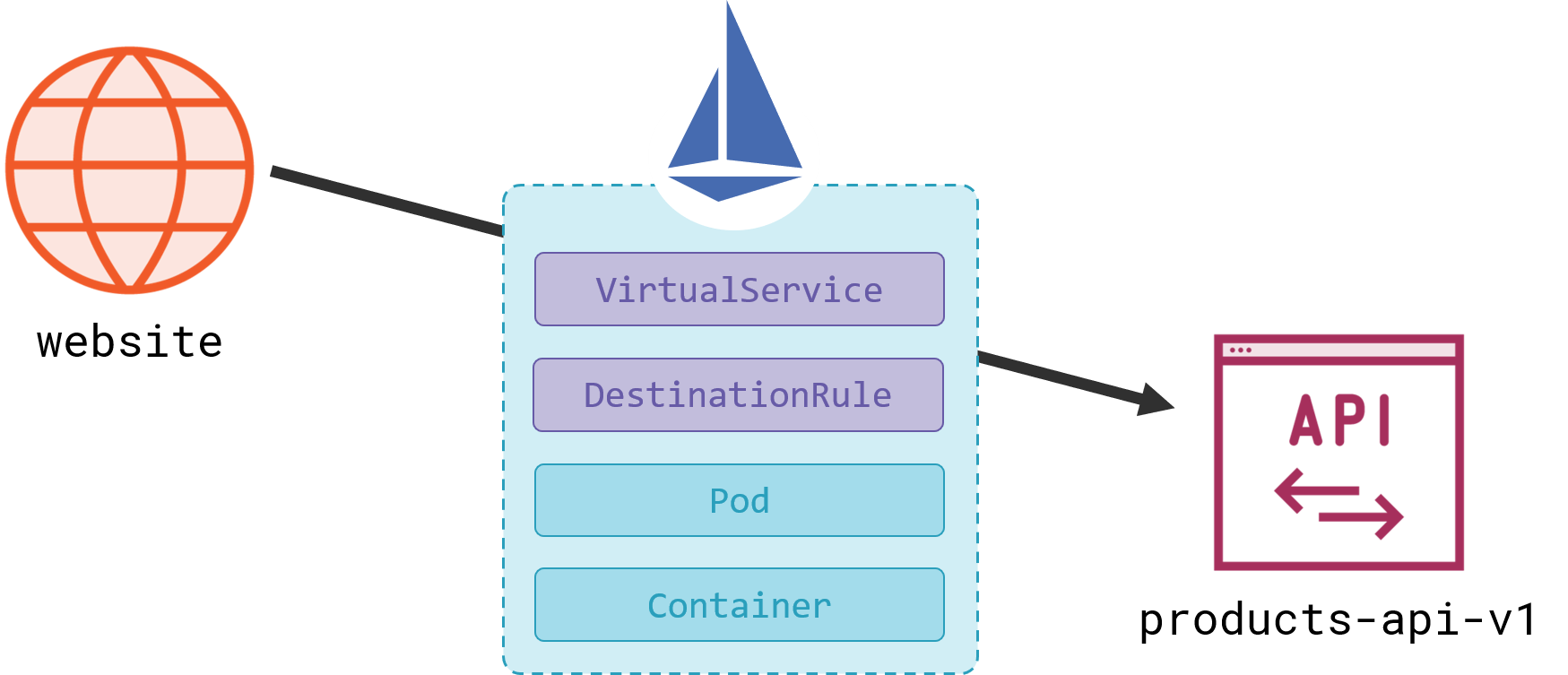

You model applications in Kubernetes using abstractions at different levels - Pods and Deployments for the compute layer, Persistent Volumes and Claims for storage, Services and Endpoints for the network. It gets complicated, and then Istio layers on more abstractions to the networking layer - VirtualServices and DestinationRules:

Moving from the 101 into the next level of detail is a hard drop with Istio, because you really need a good grasp of Kubernetes to understand how the model changes. But if you’re not familiar with Kubernetes YAML you can still understand the features Istio provides and how you enable them.

A VirtualService is used for routing traffic. It’s an abstraction between the address a client requests and the actual destination where the traffic gets sent. It’s described in YAML like any other Kubernetes resource (it’s a custom resource type which Istio installs in your cluster):

    apiVersion: networking.istio.io/v1beta1
    kind: VirtualService
    metadata:
      name: stock-api-vs
    spec:
      hosts:
        - stock-api
      http:
        - route:
            - destination:
                host: stock-api-internal
                subset: v1
          retries:
            attempts: 3

This object describes the following network model:

- a set of rules which get applied when an application in the service mesh makes an HTTP request to the address `stock-api`
- the actual destination will be one of the Pods registered as endpoints in the Kubernetes Service called `stock-api-internal`
- Pods in the Service get narrowed down to a subset called `v1`, which is described in another part of the Istio model
- any network failures between the client and the server application in the Pod are automatically retried up to 3 times

That’s all the configuration you need to get traffic routing and failure management for your HTTP services. Istio understands the HTTP protocol, so it can identify failed calls and retry them. Out of the box it retries a limited set of conditions, such as connection failures, and the retryOn field lets you widen that, for example to 5xx for any response with a status code in the 500 range. The client doesn’t even see the retries - it’s waiting for a response to the original call while Istio is retrying the failures on its behalf.
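The conditions that trigger a retry can also be stated explicitly. The sketch below extends the same VirtualService, assuming you want every 500-range response retried and each attempt capped at two seconds. The retryOn and perTryTimeout fields belong to Istio’s retry settings; the values shown are illustrative, not recommendations.

```yaml
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: stock-api-vs
spec:
  hosts:
    - stock-api
  http:
    - route:
        - destination:
            host: stock-api-internal
            subset: v1
      retries:
        attempts: 3          # retry a failed call up to 3 times
        perTryTimeout: 2s    # assumed value: give up on each attempt after 2 seconds
        retryOn: 5xx         # retry on any 5xx response or when the server does not respond
```

Retrying every 5xx response is only safe when the calls are idempotent, so a narrower retryOn list may suit write operations better.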

Retries can fix temporary issues, but if the application in a Pod is consistently failing, then retries can overload it and make the situation worse. Istio deals with that with circuit breaker functionality, which is modeled in the DestinationRule:

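    apiVersion: networking.istio.io/v1beta1
    kind: DestinationRule
    metadata:
      name: stock-api-dr
    spec:
      # must match the destination host in the VirtualService
      host: stock-api-internal
      subsets:
        - name: v1
          labels:
            version: v1
          # outlier detection is configured under a traffic policy
          trafficPolicy:
            outlierDetection:
              consecutive5xxErrors: 3
              interval: 2m
              baseEjectionTime: 5m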

This is the second part of the same network model for the stock-api service and it specifies:

- the Pods in the `v1` subset are identified with a Kubernetes label - this mechanism supports blue-green and canary deployments, as you can distribute traffic to different subsets based on Pod labels
- `v1` Pods have a circuit breaker applied (called outlier detection) - if any one Pod returns 3 consecutive 5xx errors, it’s removed from the subset for 5 minutes, with Pods checked every two minutes

Circuit breakers prevent retries escalating and causing cascading failures. Removing Pods from the subset means they don’t receive any traffic, so that can give them time to fix themselves - or for Kubernetes to replace them.
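The subset mechanism is also what drives a canary deployment. As a sketch, assuming a second set of Pods labelled version: v2 exists (the v2 subset and the 90/10 split are hypothetical, not part of the model above), a VirtualService can weight traffic between subsets defined in the DestinationRule:

```yaml
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: stock-api-dr
spec:
  host: stock-api-internal   # the Service the VirtualService routes to
  subsets:
    - name: v1
      labels:
        version: v1
    - name: v2               # hypothetical canary subset
      labels:
        version: v2
---
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: stock-api-vs
spec:
  hosts:
    - stock-api
  http:
    - route:
        - destination:
            host: stock-api-internal
            subset: v1
          weight: 90         # most traffic stays on the current version
        - destination:
            host: stock-api-internal
            subset: v2
          weight: 10         # a small share goes to the canary
```

Shifting the weights in steps (for example 90/10, then 50/50, then 0/100) moves traffic to the new version without touching the application.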

The YAML is not straightforward, but you get a lot of functionality with no code, and it works in the same way for any language and application platform.

Encrypting Traffic with Mutual TLS

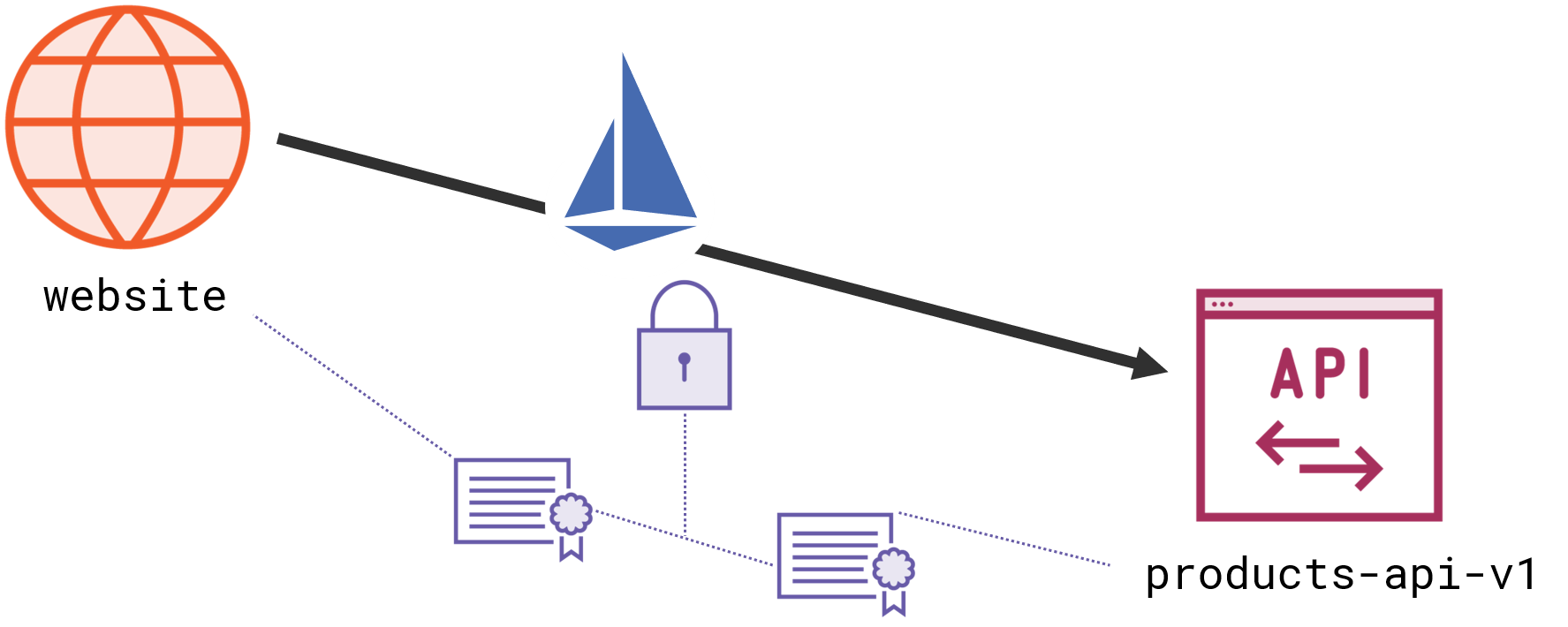

Encryption is much simpler than traffic management, because you can enable it across all your services by default. Istio supports mutual-TLS, which means clients supply a certificate to identify themselves, as well as servers supplying a certificate to use for encryption.

Clients can make a plain HTTP call and Istio will actually send it as an encrypted HTTPS request with a client certificate attached. Servers listen for HTTP traffic, but the Istio proxy for the server is listening for HTTPS - providing its own certificate and checking the client certificate:

Istio can do that because it owns both sides of the conversation. It’s like a person-in-the-middle attack, except that it increases security, instead of stealing bank details. The big advantage here is that Istio manages all the certificates. Every component registered with the mesh gets its own TLS certificate and Istio manages the storage, distribution and rotation of the certs.

The default Istio setup enforces mutual-TLS for all services registered with the mesh, but it allows Pods which are not registered with the mesh to use plain HTTP. That lets you mix Istio and non-Istio services in your cluster and you don’t need any extra configuration if that’s the security model you want. You can change that setup and block any non-Istio traffic with more YAML, specifying a PeerAuthentication policy:

    apiVersion: security.istio.io/v1beta1
    kind: PeerAuthentication
    metadata:
      name: default
      namespace: istio-system
    spec:
      mtls:
        mode: STRICT

Strict mode rejects any requests which aren’t using mutual-TLS. Because this policy is created in istio-system, which is Istio’s root namespace in a default installation, it applies strict mode across the whole mesh. You can be more selective and configure it for specific namespaces or individual services.
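A narrower scope uses the same resource type. The following sketch assumes a namespace called stock and Pods labelled app: stock-api (both names are hypothetical). The first policy makes mutual-TLS mandatory for the whole namespace, and the second relaxes it for one workload using a selector.

```yaml
apiVersion: security.istio.io/v1beta1
kind: PeerAuthentication
metadata:
  name: default
  namespace: stock           # hypothetical namespace; applies to every workload in it
spec:
  mtls:
    mode: STRICT
---
apiVersion: security.istio.io/v1beta1
kind: PeerAuthentication
metadata:
  name: stock-api-permissive
  namespace: stock
spec:
  selector:
    matchLabels:
      app: stock-api         # hypothetical label; only matching Pods are affected
  mtls:
    mode: PERMISSIVE         # accept both mutual-TLS and plain-text traffic
```

Workload-level policies take precedence over namespace-level ones, which in turn override the mesh-wide policy.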

Once you have mutual-TLS in place, you can also model authorization, because clients and servers can be uniquely identified from their certificates. You can specify rules with an AuthorizationPolicy to explicitly allow or deny traffic. You might use that to restrict access to the user details service - so it can be called by the website but not by any other component.
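As a sketch of that restriction, assume the user details service runs in Pods labelled app: user-details and the website runs under a service account called website in the default namespace. All of these names are hypothetical, and cluster.local is assumed to be the mesh trust domain.

```yaml
apiVersion: security.istio.io/v1beta1
kind: AuthorizationPolicy
metadata:
  name: user-details-allow-website
  namespace: default
spec:
  selector:
    matchLabels:
      app: user-details      # the workload being protected
  action: ALLOW
  rules:
    - from:
        - source:
            # identity taken from the caller's mutual-TLS certificate
            principals: ["cluster.local/ns/default/sa/website"]
```

Once an ALLOW policy applies to a workload, requests that match none of its rules are denied, so other components lose access without needing a separate deny rule.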

Istio also supports end-user authorization, so you can allow or deny access to services for individual users. That relies on a JSON Web Token (JWT) in the header for incoming requests, listing claims about the user. You configure trusted JWT issuers and then you use the claims in the token to enforce authorization policies.
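A minimal sketch of that setup, assuming a token issuer at https://auth.example.com, a groups claim in the token, and Pods labelled app: user-details (all hypothetical):

```yaml
apiVersion: security.istio.io/v1beta1
kind: RequestAuthentication
metadata:
  name: user-details-jwt
  namespace: default
spec:
  selector:
    matchLabels:
      app: user-details
  jwtRules:
    # trusted issuer and the location of its public keys (hypothetical URLs)
    - issuer: "https://auth.example.com"
      jwksUri: "https://auth.example.com/.well-known/jwks.json"
---
apiVersion: security.istio.io/v1beta1
kind: AuthorizationPolicy
metadata:
  name: user-details-require-admin
  namespace: default
spec:
  selector:
    matchLabels:
      app: user-details
  action: ALLOW
  rules:
    - from:
        - source:
            requestPrincipals: ["*"]   # any request carrying a valid token
      when:
        - key: request.auth.claims[groups]
          values: ["admin"]            # hypothetical claim value
```

A RequestAuthentication on its own only rejects invalid tokens; requests with no token still pass, which is why the AuthorizationPolicy also requires a request principal.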

Monitoring Communication and Performance

You can see how a central component managing the network is incredibly powerful for layering on infrastructure-level concerns like routing, failure management and security. Istio has a lot more features to explore, but the last one we’ll cover here is observability.

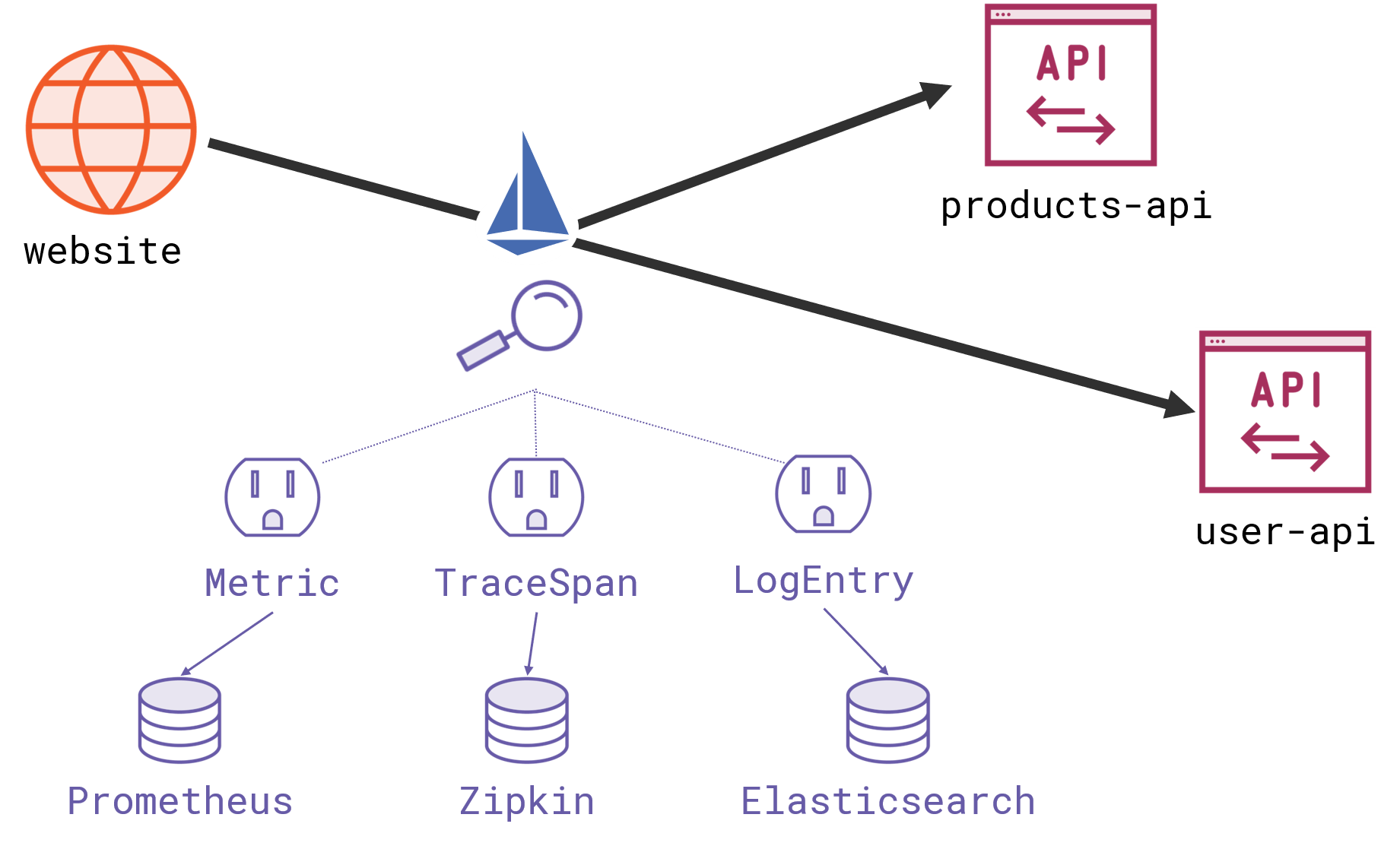

All your network traffic passes through Istio, and the proxies can be configured to send metrics and logs to backend services. Istio supports standard backends here like Prometheus and Zipkin so you can build up highly detailed visibility into your service communication:

You get a set of Grafana dashboards to monitor the health of your services, showing the number of requests per second, the success rate and duration of calls. That’s all information which Istio can collect from the proxies, so you don’t need any code in your clients or servers to get that level of detail, which makes Istio a great way to add instrumentation to apps which don’t have any.

The next level of detail is distributed tracing, which gives you a breakdown of all the network calls made from an initial parent call. A request for a web page could trigger multiple service calls from the website; Istio can track all those and show you where the time is being spent in a tracing UI like Jaeger. You need some code changes for this, because each service has to pass the tracing headers from its incoming request on to its outgoing calls, but there are client libraries in all the major languages to do that for you.

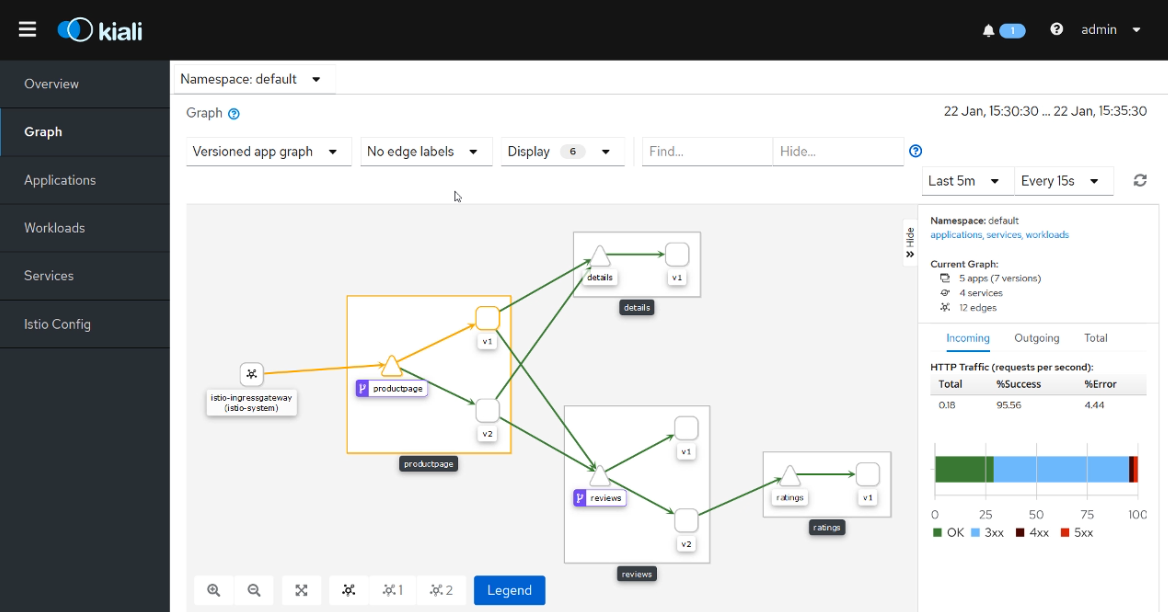

If you don’t need that level of detail, you can still get great insight into the communication in your app using Kiali - an add-on for Istio which gives you a real-time view of traffic in your mesh:

This image (snapped from the observability module of Managing Apps on Kubernetes with Istio) shows a canary deployment in progress, and in the Kiali UI you can track the percentage of traffic going to each version of the services.

This all comes with no code changes and no additional setup in your application model, but it’s not completely free. Monitoring can add a lot of compute overhead, so all these observability features are optional. You’ll only enable the ones you need.
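One way to keep that overhead in check is to trace only a sample of requests. The sketch below assumes a recent Istio release that includes the Telemetry API and a tracing backend already configured for the mesh; the 10% rate is illustrative.

```yaml
apiVersion: telemetry.istio.io/v1alpha1
kind: Telemetry
metadata:
  name: mesh-default
  namespace: istio-system    # root namespace, so the setting applies mesh-wide
spec:
  tracing:
    - randomSamplingPercentage: 10.0   # record traces for roughly 10% of requests
```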

Istio vs. The Others

Istio is not the only service mesh - the main alternative is Linkerd, which is simpler and typically more performant, but it doesn’t have all the features of Istio. Traefik Maesh works in a different way, using a shared network proxy for every service running on a machine. Open Service Mesh and the Service Mesh Interface are establishing a standard model to describe service mesh features, independent of the actual mesh technology.

Bringing a service mesh into your architecture brings a significant dependency. If you use it for routing, security and observability then none of those features are in your application code. If you then decide against using the mesh, you need to go back to your services and write all that code. It’s good to have different options so you can evaluate different mesh technologies, but in reality that list will probably only contain Istio and Linkerd - the others don’t have the same maturity or community.

At that point the choice really comes down to features versus complexity. Linkerd is easy to get started with, and gives you traffic routing, security and observability - but without all the functionality of Istio. For authorization, circuit breaking, fault injection and more routing options, Istio is the most complete service mesh.